I still remember the first time I trusted an AI answer without checking it. It gave me a perfectly written explanation that sounded smart and polished, but later I found out it was completely wrong. That moment pushed me to understand what AI hallucination is and why AI can sound so confident while being incorrect.

If you use AI tools daily like I do, this is something you need to understand. It is not about avoiding AI. It is about using it smarter so you do not fall into the trap of believing everything it says.

AI Hallucination Explained In Simple Terms

AI hallucination is a phenomenon where a generative AI model produces a response that sounds confident and well structured but contains incorrect or fabricated information. It is not a glitch in the traditional sense. It is a natural result of how these systems are built.

Instead of knowing facts like humans do, AI predicts text based on patterns. That means it can generate answers that feel real even when they are not. This is why hallucinations often go unnoticed at first.

Understanding Why AI Feels Believable

AI feels convincing because it is built to produce fluent language. Its responses sound natural and complete, which makes even incorrect answers appear trustworthy. From my experience, the more detailed the response, the harder it becomes to detect mistakes. That is why awareness is your first line of defense when using AI tools.

Why Hallucinations Happen In AI Systems

AI models do not understand the truth. They act as prediction engines that generate the most likely next word in a sequence. This means accuracy is not guaranteed, especially when the model faces unfamiliar or unclear inputs.

Several technical factors contribute to hallucinations. These include how the AI model was trained, the quality of data it learned from, and how it processes new information.

Next Word Prediction Causes Confident Mistakes

AI systems rely heavily on predicting the next word based on patterns. If there is a knowledge gap, the model fills it by guessing what sounds right. This is why you might see answers that are grammatically perfect but factually wrong. The system prioritizes flow over correctness.
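To make this concrete, here is a minimal sketch of next word prediction using a toy word-pair counter. It is not how real language models are built (they use neural networks trained on far more text), and the corpus and function names are made up for illustration. The point is only that a pattern-based predictor will happily complete a question about a place it has never seen.

```python
from collections import Counter, defaultdict

# Tiny made-up corpus; a real model trains on vastly more text.
CORPUS = (
    "the capital of france is paris . "
    "the capital of italy is rome . "
    "the capital of spain is madrid ."
)

def train_bigrams(text):
    """Count which word follows each word in the training text."""
    follows = defaultdict(Counter)
    words = text.split()
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows

def complete(follows, prompt, max_words=1):
    """Extend the prompt by repeatedly picking the most frequent next word."""
    words = prompt.split()
    for _ in range(max_words):
        candidates = follows.get(words[-1])
        if not candidates:
            break  # no pattern to follow, so stop
        words.append(candidates.most_common(1)[0][0])
    return " ".join(words)

model = train_bigrams(CORPUS)
# "atlantis" never appears in the corpus, yet the model still answers.
answer = complete(model, "the capital of atlantis is")
print(answer)
```

The toy model finishes the sentence with one of the real capitals it has seen, because "is" is usually followed by a city name in its data. The sentence reads fine and is simply not true, which is the same flow-over-correctness behavior described above.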

Training Data Limitations Affect Accuracy

AI learns from massive datasets, but those datasets are not perfect. They can be outdated, biased, or incomplete.

For example, if a model lacks exposure to certain cultures or languages, it may produce inaccurate or generalized responses. I have noticed this especially when asking niche or region specific questions.

Lack Of Real World Grounding Creates Gaps

AI does not experience the real world. It cannot verify facts by observation or testing. It only relies on patterns from data. This lack of grounding means it cannot confirm whether something is true or false in real time. That limitation plays a major role in hallucinations.

Overfitting Reduces Flexibility

Overfitting happens when a model memorizes its training patterns too closely. It then struggles when faced with new or unfamiliar data. Instead of adapting, it forces existing patterns onto new situations. This often leads to incorrect conclusions that still sound logical, which makes it harder to trust and rely on AI.

AI Hallucination Explained With Real Examples

Examples make everything clearer. One of the most common cases is fabricated citations. AI may cite a research paper with a title, author, and date that look real, even though the paper does not exist.

Another example is mathematical reasoning. AI might show a step by step solution that appears logical but ends with a wrong answer. I have personally seen this happen when testing simple calculations.
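For simple calculations, one way to catch this is to recompute the result yourself instead of trusting the final line of the AI's working. The sketch below is one possible approach, not a standard tool: the helper names `evaluate` and `check_claim` are my own, and it only handles basic arithmetic with +, -, * and /.

```python
import ast
import operator

# Binary operators this sketch supports.
OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}

def evaluate(expression):
    """Safely evaluate a basic arithmetic expression such as '17 * 24 + 3'."""
    def walk(node):
        if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in OPS:
            return OPS[type(node.op)](walk(node.left), walk(node.right))
        if isinstance(node, ast.UnaryOp) and isinstance(node.op, ast.USub):
            return -walk(node.operand)
        raise ValueError("unsupported expression")
    return walk(ast.parse(expression, mode="eval").body)

def check_claim(expression, claimed_result, tolerance=1e-9):
    """Return True if the claimed result matches the recomputed value."""
    return abs(evaluate(expression) - claimed_result) <= tolerance

# Suppose an AI answer claimed that 17 * 24 + 3 equals 401.
print(check_claim("17 * 24 + 3", 401))  # False: the claim is wrong
print(check_claim("17 * 24 + 3", 411))  # True: 408 + 3 = 411
```

The same habit applies without code. A calculator or spreadsheet does the job just as well, as long as the number is recomputed rather than read off the AI's explanation.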

Inconsistent Logic In Conversations

Sometimes AI contradicts itself within the same conversation. It may give one answer and later provide a different version of the same fact. This happens because it does not keep a consistent record of what is true. Each response is a fresh prediction based on the prompt and the conversation so far, so nothing forces it to agree with what it said earlier.

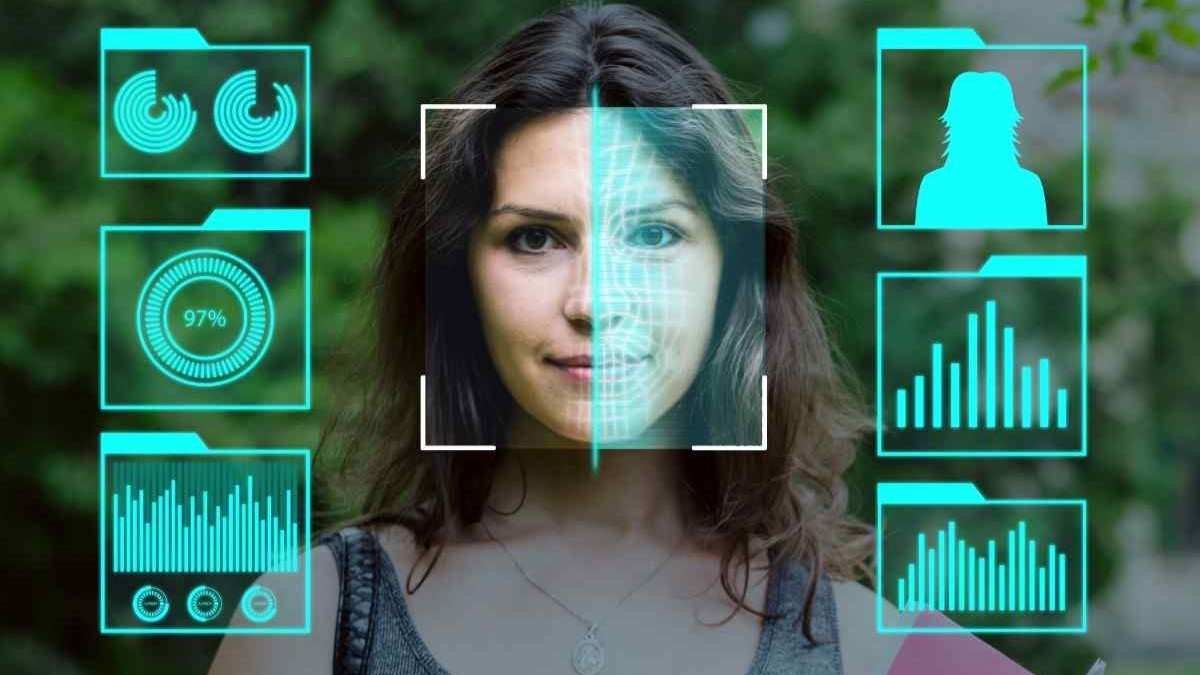

Visual Hallucinations In AI Tools

Image generators also experience hallucinations. You might see extra fingers on hands or impossible structures in buildings. These errors happen because the model tries to recreate patterns without fully understanding physical reality.

How To Handle AI Hallucination Step By Step

First, I always start by questioning the output. If something sounds too perfect or overly confident, I take a moment to verify it. This habit alone has saved me from multiple mistakes.

Next, I cross check information using trusted sources. Even a quick search can confirm whether the AI response is accurate or not. This step matters most for high-stakes decisions.

Then, I improve my prompts by adding context and clarity. When I provide detailed instructions, the AI produces more reliable results. Vague prompts increase the chances of hallucination.

Finally, I treat AI as a support tool rather than a final authority. I use it to generate ideas, but I take responsibility for validating the information before using it.

Over time, I have learned to combine AI speed with human judgment. This balance makes a huge difference in accuracy. When you use AI with awareness, you get the benefits without falling into the trap of blind trust.

How To Mitigate AI Hallucinations Effectively

While hallucinations cannot be completely eliminated, there are ways to reduce them.

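One effective method is retrieval augmented generation (RAG). This connects AI to external data sources, such as a document collection or a search index, so it can base its answer on retrieved information instead of guessing. The answer is still only as reliable as the sources it pulls from.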
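Here is a rough sketch of the retrieval idea. It is a simplification under stated assumptions: the documents are made-up placeholders, the ranking is plain word overlap rather than the vector search that production systems typically use, and the final step of sending the prompt to a model is left out.

```python
import re

# Hypothetical reference notes standing in for a real knowledge base.
DOCUMENTS = [
    "Refund policy: customers can request a refund within 30 days of purchase.",
    "Shipping policy: standard delivery takes 5 to 7 business days.",
    "Support hours: the help desk is open Monday to Friday.",
]

def tokenize(text):
    """Lowercase the text and return its set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, documents, top_k=1):
    """Rank documents by how many words they share with the question."""
    question_words = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]

def build_prompt(question, documents):
    """Put the retrieved text in front of the question for the model to use."""
    context = "\n".join(retrieve(question, documents))
    return (
        "Answer using only the context below. "
        "If the answer is not in the context, say you do not know.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )

prompt = build_prompt("How many days do I have to request a refund?", DOCUMENTS)
print(prompt)
```

The model now has the refund policy text in front of it, along with an instruction to admit when the context does not contain the answer. That narrows the room for guessing, though it does not remove it.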

Another approach is keeping humans involved in the process. Reviewing AI outputs before using them ensures accuracy in critical situations.

Prompt engineering also plays a key role. When you give clear instructions and constraints, the AI has less room to make incorrect assumptions. Lowering randomness settings, often called temperature, in tools that expose them can also make answers more consistent.
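The randomness setting in many tools is commonly called temperature. As a rough illustration of what it does, the sketch below applies a temperature to a set of made-up word scores before turning them into probabilities. The scores and the function name are assumptions for this example, and real tools may handle the setting differently.

```python
import math

def softmax_with_temperature(scores, temperature):
    """Turn raw scores into probabilities; lower temperature sharpens them."""
    scaled = [s / temperature for s in scores]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical scores for three candidate next words.
scores = [2.0, 1.0, 0.5]

high = softmax_with_temperature(scores, 1.5)  # more random
low = softmax_with_temperature(scores, 0.2)   # more focused

print([round(p, 3) for p in high])
print([round(p, 3) for p in low])
```

At the lower temperature, almost all of the probability lands on the top-scoring word, so the output varies less from run to run. This makes answers more repeatable, but it does not make them correct: if the top-scoring word is wrong, the model will just be wrong more consistently.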

Frequently Asked Questions

1. What is AI hallucination in simple terms?

It is when AI generates incorrect or made up information while sounding confident and accurate.

2. Why does AI hallucinate even when it sounds correct?

Because it predicts patterns in language rather than verifying facts. Fluency does not equal accuracy.

3. Can AI hallucinations be completely avoided?

No, but you can reduce them by using clear prompts and verifying outputs.

4. Is AI hallucination dangerous?

It can be risky in areas like healthcare, finance, and legal advice if not checked properly.

AI still hallucinating?

Now that you understand what AI hallucination is, you can approach AI with a smarter mindset. I always remind myself that AI is a powerful assistant, not a perfect source of truth. When you question, verify, and refine, you turn AI into a reliable tool instead of a risky shortcut. That simple shift changes everything.